🕶️ Zuckerberg's New Vision

01: Meta Adds AI To Everything

Facebook's rebrand to "Meta" signified a shift in focus towards the metaverse, a shared digital universe. But beyond this concept, a critical factor in its strategy is the integration of AI across its ecosystem. Zuckerberg 'won' the internet last week when he unveiled a wave of new AI products, and here's what they mean for both users and businesses.

Meta's AI Expansion

From Oculus headsets to wearables, Meta is infusing AI into nearly all its devices and platforms. This strategic move intends to create a seamless and immersive user experience. The goal? To craft a digital world as intuitive and interactive as the physical one.

The latest Oculus headset, the Quest 3, is more affordable and boasts impressive features. Users can expect sharp visual clarity from full-color passthrough technology that has ten times as many pixels as its predecessor. With a 110-degree field of view and the Qualcomm Snapdragon XR2 Gen 2 chip under the hood, it promises an immersive experience like no other.

AI Initiatives Galore

Meta's AI focus isn't limited to its Oculus range. The company announced a handful of AI initiatives, including:

Emu: A foundational model for AI image generation.

Meta AI: Personalized AI assistants are integrated across Meta's products, making interactions more innovative and customized.

Celebrity AI Chatbots: Imagine chatting with your favorite celebrity. With Meta's AI, this dream is close to reality.

AI Studio: A platform designed for businesses, allowing them to build AI chatbots tailored for Meta's suite of products. This initiative hints at Meta's broader strategy to make the metaverse business-friendly.

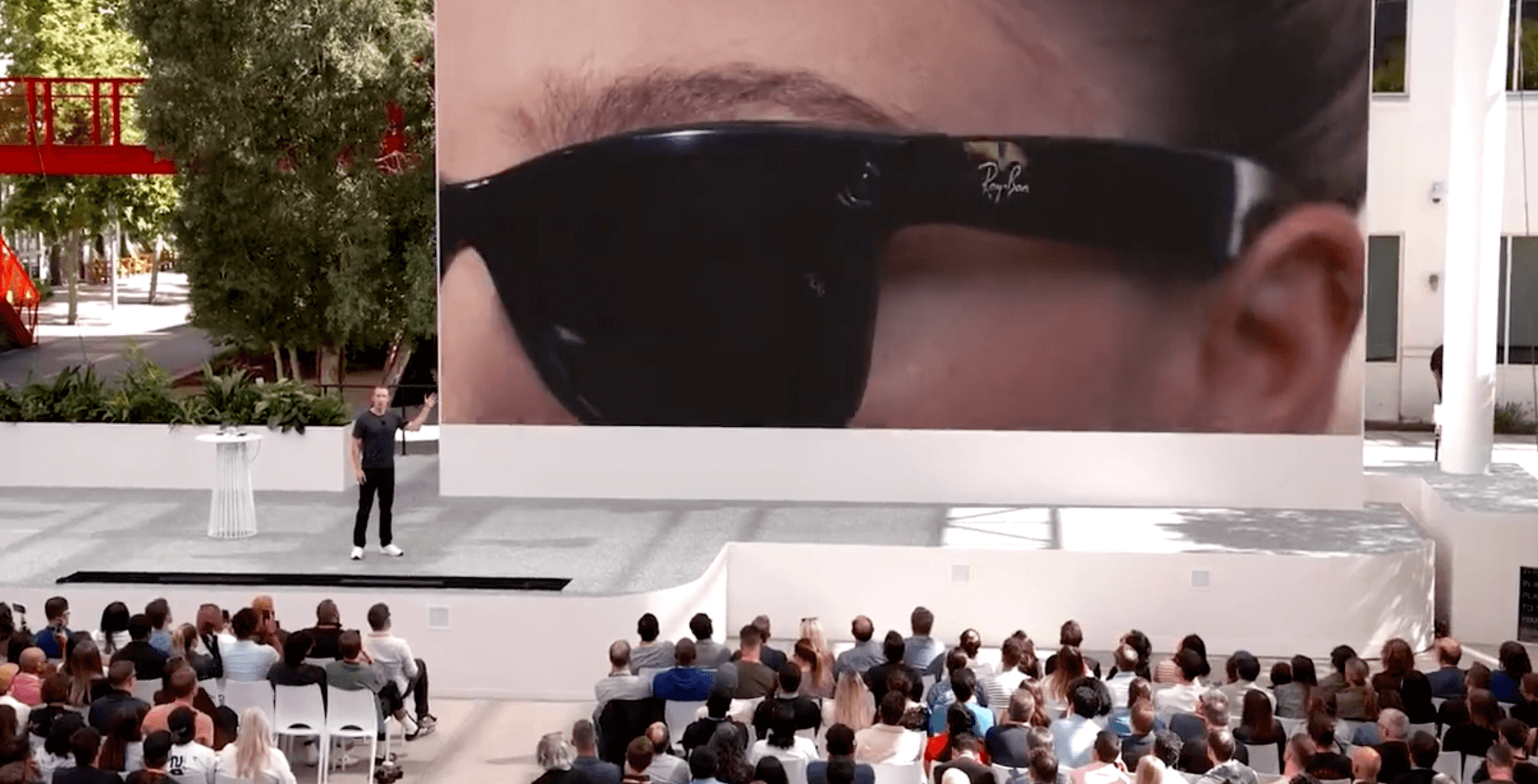

Ray-Ban Meta Smart Glasses

For those who value style as much as tech, the Ray-Ban Meta smart glasses announcement is exciting news. These glasses aren't just a fashion statement; they're a peek into the future of wearables 🔗. With plans to go multimodal next year, these smart glasses will interface with the user's environment, offering real-time information, photo and video recording, music, and more.

Meta's announcements underscore a clear message: The future is AI-driven, and the metaverse is its playground. The line between the digital and physical worlds is set to blur even further.

And as for the Ray-Ban Meta smart glasses? My Ray-Bans are getting old, and I might get a pair. How about you?

02: OpenAI Goes Multimodal

OpenAI is on a roll, and the recent developments have the tech world buzzing. A week after the impressive DALL-E 3 announcement, GPT has taken another leap forward by incorporating image recognition.

The result? An AI that's not only text-savvy but visually intelligent. The tech community has showcased its capabilities, and the outcomes are powerful. Check out a few 🔗.

But that's not all. OpenAI has enhanced the conversational experience with ChatGPT by introducing lifelike voice models. These models don't just recognize and generate speech; they bring emotion, pauses, and emphasis into the conversation. The experience is so authentic that it feels like a real spoken dialogue with a human.

More, you ask? Remember the days when ChatGPT could surf the web? We're back, baby! OpenAI has reintroduced web browsing, so ChatGPT is no longer limited to its September 2021 training data cut-off. Whether you need it for technical research, product reviews, vacation planning, or more, browsing is currently available to Plus and Enterprise users, and the good news is that it's set to roll out to all users shortly. Select "Browse with Bing" under GPT-4, and you're ready to go real-time.

A Recap of OpenAI's Whirlwind Week:

DALL-E 3 made its grand debut.

Multimodality was introduced with GPT-4V.

CEO Sam Altman playfully hinted on Reddit that AGI has been "achieved internally."

OpenAI's valuation soared, with rumors suggesting an $80-$90 billion range.

ChatGPT's much-missed internet browsing feature made its comeback.

With such rapid advancements, it's clear that OpenAI is not just shaping the future of AI; it's redefining it. As users and enthusiasts, we're just along for this ride.

03: AI & Breaking Language Barriers

A few weeks ago, I covered the advancements of HeyGen's AI Translation services 🔗. The realistic translations left me thinking about the vast amount of valuable content locked within the confines of a single language. At the same time, people across the globe are eagerly searching for that same content in their native language.

As a case study of why this matters, take my friend and client, Lewis Howes, and his thriving YouTube Channel 🔗 that boasts 3.1 million subscribers. His channel is a treasure trove of knowledge spanning many topics, from relationships and mindset to health, finance, and more. But what if you don't speak English and want to learn from the experts he has on his show? Recognizing this gap, Team Greatness launched a few dedicated international channels on YouTube, and they are incredible:

Lewis Howes in Español 🔗 - 904k Subs

Lewis Howes in Português 🔗 - 220k Subs

Our vision for 2024 is to scale these efforts further. The underlying principle? Accessibility matters, and AI makes it easier to grow faster and reach further.

Guess who else understands the value of accessibility and has one of the largest content marketplaces on the internet?

Spotify.

Spotify is piloting AI voice translation 🔗 to make podcast content globally accessible. This initiative means your favorite podcasters might soon speak to you in your native language. Spotify recognizes that for content to be universal, it must transcend language barriers. By harnessing AI's potential, Spotify envisions a world where language no longer confines content and every piece of content finds its listeners, no matter their language.

🧠 Now It's Your Turn, and here's my challenge for you: Reflect on your content assets.

What do you possess that could captivate new audiences with the simple addition of language translation? The future is multilingual. Are you ready?

Enjoy this edition?

Get CTRL+ALT+BUILD™ delivered to your inbox every week.